Dial C* for Operator - Cass Operator Meet Reaper

Reaper is a critical tool for managing Apache Cassandra. Kubernetes-based deployments of Cassandra are no exception to this. Automation is the name of the game with Kubernetes operators. It therefore makes sense that Cass Operator should have tight integration with Reaper. Fortunately, Cass Operator v1.3.0 introduced support for Reaper. This post will take a look at what that means in practice.

Note: If you want to try the examples in this post, install Cass Operator using the instructions in the project’s README.

Pods

Before we dive into the details, let’s take a moment to talk about Kubernetes pods. If you think a pod refers to a container, you are mostly right. A pod actually consists of one or more containers that are deployed together as a single unit. The containers are always scheduled together on the same Kubernetes worker node.

Containers within a pod share network resources and can communicate with each other over localhost. This lends itself very nicely to the proxy pattern. You will find plenty of great examples of the proxy pattern implemented in service meshes.

Containers within a pod also share storage resources. The same volume can be mounted within multiple containers in a pod. This facilitates the sidecar pattern, which is used extensively for logging, among other things.
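To make the shared-storage idea concrete, here is a minimal sketch of a two-container pod in which a sidecar streams a log file written by the main container. Everything in it is hypothetical and unrelated to Cass Operator: the pod name sidecar-demo, the container names, the busybox image, and the log path are assumptions chosen for illustration.

```shell
# Write a hypothetical two-container pod manifest to sidecar-demo.yaml.
# All names, images, and paths below are illustrative assumptions.
cat > sidecar-demo.yaml <<'EOF'
apiVersion: v1
kind: Pod
metadata:
  name: sidecar-demo
spec:
  volumes:
    - name: logs            # scratch volume shared by both containers
      emptyDir: {}
  containers:
    - name: app             # appends a timestamp to the shared file every 5s
      image: busybox
      command: ["sh", "-c", "while true; do date >> /var/log/app/app.log; sleep 5; done"]
      volumeMounts:
        - name: logs
          mountPath: /var/log/app
    - name: log-tailer      # sidecar: streams the same file to its own stdout
      image: busybox
      command: ["sh", "-c", "tail -F /var/log/app/app.log"]
      volumeMounts:
        - name: logs
          mountPath: /var/log/app
EOF
```

After applying the manifest with kubectl apply -f sidecar-demo.yaml, running kubectl logs sidecar-demo -c log-tailer should show the lines written by the app container, since both containers mount the same volume.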

The Cassandra Pod

Now we are going to look at the pods that are ultimately deployed by Cass Operator. I will refer to them as Cassandra pods since their primary purpose is running Cassandra.

Consider the following CassandraDatacenter:

    # example-cassdc.yaml
    apiVersion: cassandra.datastax.com/v1beta1
    kind: CassandraDatacenter
    metadata:
      name: example
    spec:
      clusterName: example
      serverType: cassandra
      serverVersion: 3.11.6
      managementApiAuth:
        insecure: {}
      size: 3
      allowMultipleNodesPerWorker: true
      storageConfig:
        cassandraDataVolumeClaimSpec:
          storageClassName: server-storage
          accessModes:
            - ReadWriteOnce
          resources:
            requests:
              storage: 5Gi

Create the CassandraDatacenter as follows:

    $ kubectl -n cass-operator apply -f example-cassdc.yaml

Note: This example as well as the later one specify serverVersion: 3.11.6 for the Cassandra version. Cassandra 3.11.7 was recently released, but Cass Operator does not yet support it. See this ticket for details.

Note: Remember to create the server-storage StorageClass.

It might take a few minutes for the Cassandra cluster to fully initialize. The cluster is ready when the Ready condition in the CassandraDatacenter status reports True, e.g.,

    $ kubectl -n cass-operator get cassdc example -o yaml
    ...
    status:
      cassandraOperatorProgress: Ready
      conditions:
      - lastTransitionTime: "2020-08-10T15:17:59Z"
        status: "False"
        type: ScalingUp
      - lastTransitionTime: "2020-08-10T15:17:59Z"
        status: "True"
        type: Initialized
      - lastTransitionTime: "2020-08-10T15:17:59Z"
        status: "True"
        type: Ready
    ...
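Rather than reading the YAML by eye, the Ready condition can be polled from a script. The following is a minimal sketch, not something Cass Operator ships: the function name wait_for_cassdc_ready and the POLL_INTERVAL variable are hypothetical, and it relies only on kubectl's JSONPath filter support.

```shell
# Poll a CassandraDatacenter until its Ready condition reports True.
# Arguments: namespace, datacenter name, optional max attempts (default 60).
# POLL_INTERVAL (seconds between attempts, default 10) is a hypothetical knob.
wait_for_cassdc_ready() {
  local ns=$1 dc=$2 attempts=${3:-60}
  local i=0 status
  while [ "$i" -lt "$attempts" ]; do
    # JSONPath filter: select the condition whose type is Ready, print its status
    status=$(kubectl -n "$ns" get cassdc "$dc" \
      -o jsonpath='{.status.conditions[?(@.type=="Ready")].status}')
    if [ "$status" = "True" ]; then
      return 0
    fi
    i=$((i + 1))
    sleep "${POLL_INTERVAL:-10}"
  done
  echo "timed out waiting for $dc to become Ready" >&2
  return 1
}
```

For the example above, the call would be wait_for_cassdc_ready cass-operator example. It returns a non-zero exit status if the datacenter is not ready within the allotted attempts.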

Three (3) pods are created and deployed, one per Cassandra node.

    $ kubectl -n cass-operator get pods -l cassandra.datastax.com/cluster=example
    NAME                            READY   STATUS    RESTARTS   AGE
    example-example-default-sts-0   2/2     Running   0          4h18m
    example-example-default-sts-1   2/2     Running   0          4h18m
    example-example-default-sts-2   2/2     Running   0          133m

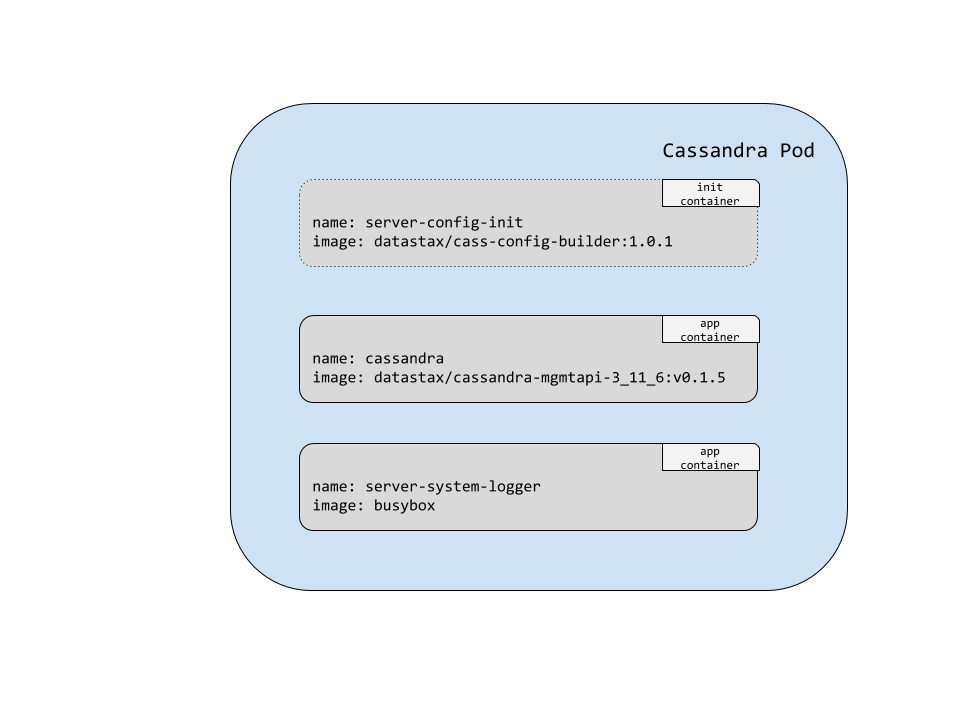

Each row in the output has 2/2 in the Ready column. What exactly does that mean? It means that there are two application containers in the pod, and both are ready. Here is a diagram showing the containers deployed in a single Cassandra pod:

This shows three containers, the first of which labled as an init container. Init containers have to run to successful completion before any of the main application containers are started.

We can use a JSONPath query with kubectl to verify the names of the application containers:

    $ kubectl -n cass-operator get pod example-example-default-sts-0 -o jsonpath='{.spec.containers[*].name}' | tr -s '[:space:]' '\n'
    cassandra
    server-system-logger

The cassandra container runs the Management API for Apache Cassandra, which manages the lifecycle of the Cassandra instance.

server-system-logger is a logging sidecar container that exposes Cassandra’s system.log. We can conveniently access Cassandra’s system.log using the kubectl logs command as follows:

    $ kubectl -n cass-operator logs example-example-default-sts-0 -c server-system-logger
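The JSONPath query used earlier for application containers works just as well for init containers, which live under a separate field of the pod spec. Here is a small sketch wrapping both cases; the function name pod_container_names is hypothetical.

```shell
# Print the names of a pod's containers, one per line.
# Arguments: namespace, pod name, and the pod spec field to read:
#   containers (application containers, the default) or initContainers.
pod_container_names() {
  local ns=$1 pod=$2 field=${3:-containers}
  kubectl -n "$ns" get pod "$pod" -o jsonpath="{.spec.${field}[*].name}" \
    | tr -s '[:space:]' '\n'
}
```

Calling pod_container_names cass-operator example-example-default-sts-0 initContainers lists the init container shown in the diagram, while omitting the last argument lists the application containers.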

The Cassandra Pod with Reaper

Here is another CassandraDatacenter specifying that Reaper should be deployed:

    # example-reaper-cassdc.yaml
    apiVersion: cassandra.datastax.com/v1beta1
    kind: CassandraDatacenter
    metadata:
      name: example-reaper
    spec:
      clusterName: example-reaper
      serverType: cassandra
      serverVersion: 3.11.6
      managementApiAuth:
        insecure: {}
      size: 3
      reaper:
        enabled: true
      storageConfig:
        cassandraDataVolumeClaimSpec:
          storageClassName: server-storage
          accessModes:
            - ReadWriteOnce
          resources:
            requests:
              storage: 5Gi

Apart from the names (and the omitted allowMultipleNodesPerWorker setting), the only difference from the first CassandraDatacenter is these two lines:

    reaper:
      enabled: true

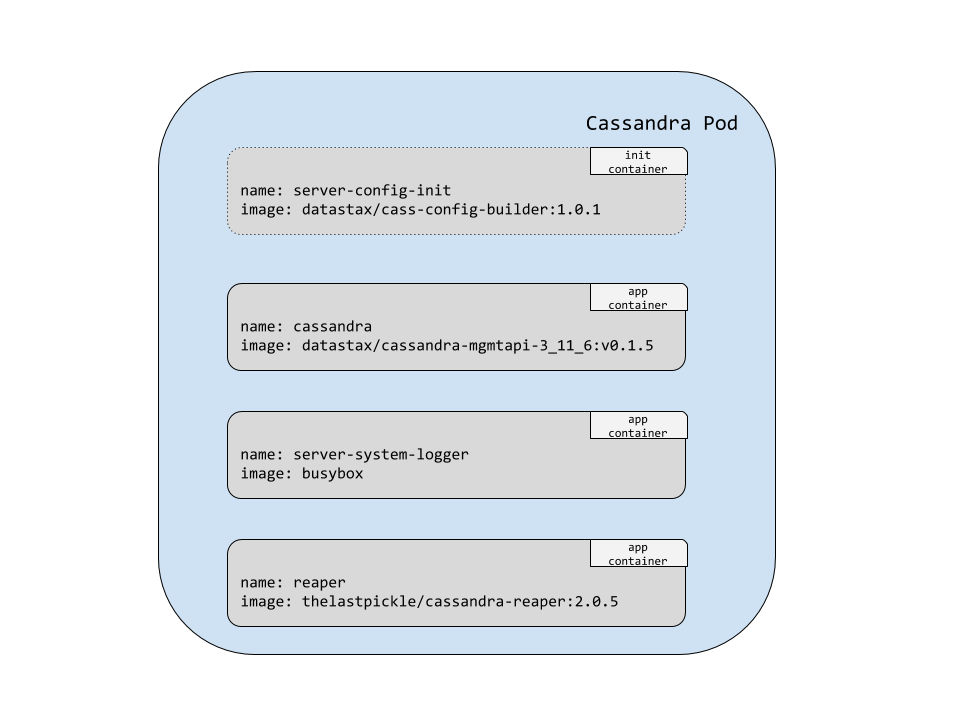

This informs Cass Operator to deploy Reaper in sidecar mode. One of the main benefits of deploying Reaper in sidecar mode is security. Reaper only needs local JMX access to perform repairs. There is no need for remote JMX access or JMX authentication to be enabled.

Once again three pods are created and deployed, one per Cassandra node.

$ kubectl -n cass-operator get pods -l cassandra.datastax.com/cluster=example-reaper

NAME READY STATUS RESTARTS AGE

example-reaper-example-reaper-default-sts-0 3/3 Running 1 6m5s

example-reaper-example-reaper-default-sts-1 3/3 Running 1 6m5s

example-reaper-example-reaper-default-sts-2 3/3 Running 1 6m4s

Now, each pod reports 3/3 in the Ready column. Here is another diagram to illustrate which containers are deployed in a single Cassandra pod:

Now we have the reaper application container in addition to the cassandra and server-system-logger containers.

Reaper Schema Initialization

In sidecar mode, Reaper automatically uses the Cassandra cluster as its storage backend. Running Reaper with a Cassandra backend requires the reaper_db keyspace to exist before Reaper can start. Cass Operator takes care of this for us with a Kubernetes Job. The following kubectl get jobs command lists the Job that gets deployed:

    $ kubectl -n cass-operator get jobs -l cassandra.datastax.com/cluster=example-reaper
    NAME                                COMPLETIONS   DURATION   AGE
    example-reaper-reaper-init-schema   1/1           12s        45m

Cass Operator deploys a Job whose name is of the form <cassandradatacenter-name>-reaper-init-schema. The Job runs a small Python script named init_keyspace.py.
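If a script needs to block until the schema Job has finished, kubectl wait can do that. The sketch below is an illustration only: both function names are hypothetical, and the naming rule is inferred from the job listing above (example-reaper maps to example-reaper-reaper-init-schema), so treat it as an assumption rather than a documented contract.

```shell
# Derive the schema-init Job name from the CassandraDatacenter name.
# Assumption: the pattern matches the job listing shown in this post.
reaper_init_job_name() {
  printf '%s-reaper-init-schema\n' "$1"
}

# Block until the schema-init Job reports the Complete condition.
# Arguments: namespace, datacenter name, optional timeout (default 120s).
wait_for_reaper_schema() {
  local ns=$1 dc=$2 timeout=${3:-120s}
  kubectl -n "$ns" wait --for=condition=complete --timeout="$timeout" \
    "job/$(reaper_init_job_name "$dc")"
}
```

For this post's example, the call would be wait_for_reaper_schema cass-operator example-reaper.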

The output from kubectl -n cass-operator get pods -l cassandra.datastax.com/cluster=example-reaper showed one restart for each pod. Those restarts were for the reaper containers. This happened because the reaper_db keyspace had not yet been initialized.

We can see this in the log output:

    $ kubectl -n cass-operator logs example-reaper-example-reaper-default-sts-1 -c reaper | grep ERROR -A 1
    ERROR [2020-08-10 20:28:19,965] [main] i.c.ReaperApplication - Storage is not ready yet, trying again to connect shortly...
    com.datastax.driver.core.exceptions.InvalidQueryException: Keyspace 'reaper_db' does not exist

The restarts are perfectly fine as there are no ordering guarantees with the start of application containers in a pod.
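The RESTARTS column is a per-pod total, so it does not say which container restarted. To confirm that the restarts belong to the reaper container, the per-container count can be read from the pod status. This is a minimal sketch; the function name container_restart_count is hypothetical.

```shell
# Print the restart count of one container in a pod.
# Arguments: namespace, pod name, container name.
container_restart_count() {
  local ns=$1 pod=$2 container=$3
  # JSONPath filter: select the status entry whose name matches the container
  kubectl -n "$ns" get pod "$pod" \
    -o jsonpath="{.status.containerStatuses[?(@.name==\"${container}\")].restartCount}"
}
```

For example, container_restart_count cass-operator example-reaper-example-reaper-default-sts-1 reaper reports the count for the reaper container alone, and the same call with cassandra as the last argument reports it for the Cassandra container.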

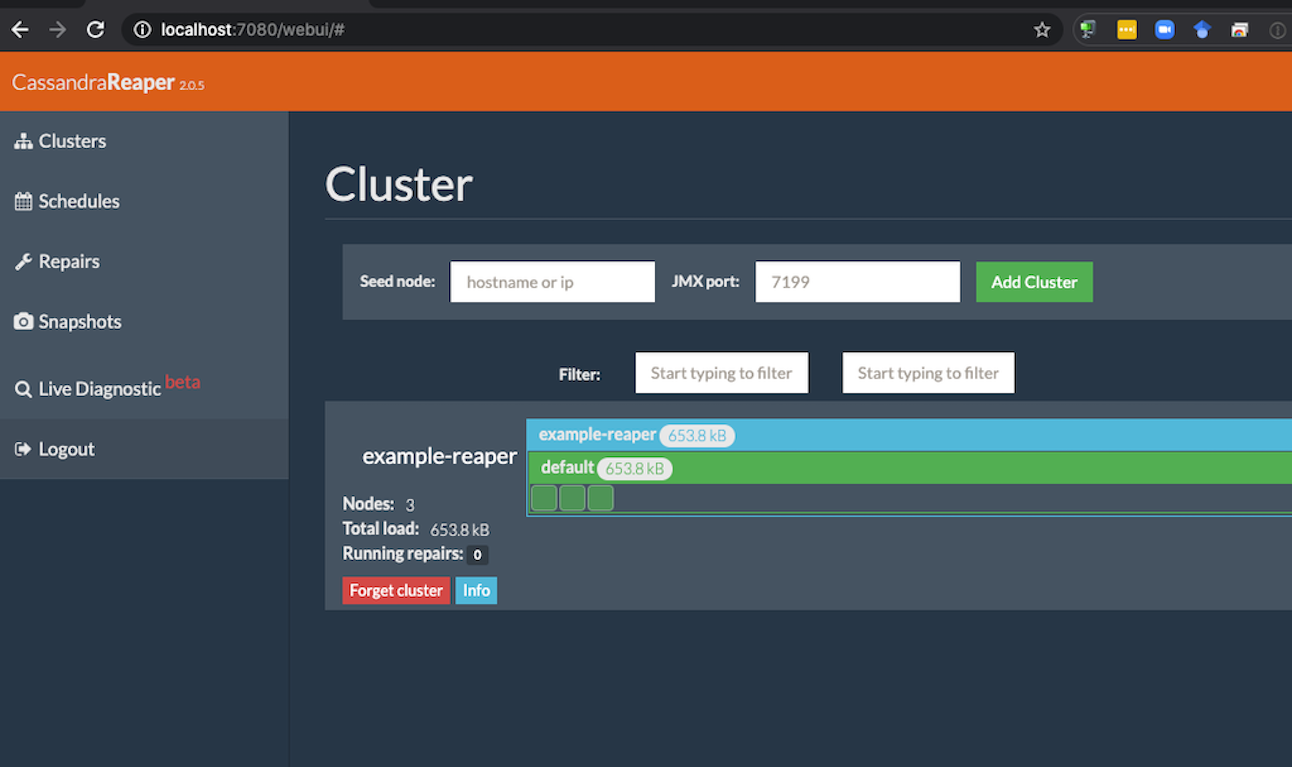

Accessing the Reaper UI

Reaper provides a rich UI that allows you to do several things including:

- Monitor Cassandra clusters

- Schedule repairs

- Manager and monitor repairs

Cass Operator deploys a Service to expose the UI. Here are the Services that Cass Operator deploys.

$ kubectl -n cass-operator get svc

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

cass-operator-metrics ClusterIP 10.0.37.211 <none> 8383/TCP,8686/TCP 8h

cassandradatacenter-webhook-service ClusterIP 10.0.33.233 <none> 443/TCP 8h

example-reaper-example-reaper-all-pods-service ClusterIP None <none> <none> 14m

example-reaper-example-reaper-service ClusterIP None <none> 9042/TCP,8080/TCP 14m

example-reaper-reaper-service ClusterIP 10.0.47.8 <none> 7080/TCP 10m

example-reaper-seed-service ClusterIP None <none> <none> 14m

The Service we are interested in has a name of the form <clusterName>-reaper-service which is example-reaper-reaper-service. It exposes the port 7080.

One of the easiest ways to access the UI is with port forwarding.

$ kubectl -n cass-operator port-forward svc/example-reaper-reaper-service 7080:7080

Forwarding from 127.0.0.1:7080 -> 7080

Forwarding from [::1]:7080 -> 7080

Handling connection for 7080

Here is a screenshot of the UI:

Our example-reaper cluster shows up in the cluster list because it gets automatically registered when Reaper runs in sidecar mode.

Accessing the Reaper REST API

Reaper also provides a REST API, in addition to the UI, for managing clusters and repair schedules. It listens for requests on the ui port (7080), which means it is also accessible through example-reaper-reaper-service. With the port forward from the previous section still running, here is an example of listing registered clusters via curl:

    $ curl -H "Content-Type: application/json" http://localhost:7080/cluster
    ["example-reaper"]
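The response is a plain JSON array of cluster names, which makes it easy to check from a script whether a cluster has been registered. Below is a rough sketch using only standard text tools; the function name cluster_registered is hypothetical, and the filter assumes cluster names contain no commas, quotes, brackets, or whitespace.

```shell
# Succeed if the given cluster name appears in the JSON array read from
# stdin, e.g. the response body of GET /cluster.
# Deliberately simple: strips brackets, quotes, and whitespace, then
# compares each comma-separated entry against the name exactly.
cluster_registered() {
  sed 's/[]["[:space:]]//g' | tr ',' '\n' | grep -qxF -- "$1"
}
```

With the port forward active, a check could look like curl -s http://localhost:7080/cluster | cluster_registered example-reaper && echo registered. For anything beyond this simple response shape, a real JSON parser would be the safer choice.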

Wrap Up

Reaper is an essential tool for managing Cassandra. Future releases of Cass Operator may make some settings such as resource requirements (i.e., CPU, memory) and authentication/authorization configurable. It might also support deploying Reaper with a different topology. For example, instead of using sidecar mode, Cass Operator might provide the option to deploy a single Reaper instance. This integration is a big improvement in making it easier to run and manage Cassandra in Kubernetes.