Reaper 3.0 for Apache Cassandra was released

The K8ssandra team is pleased to announce the release of Reaper 3.0. Let’s dive into the main new features and improvements this major version brings, along with some notable removals.

Storage backends

Over the years, we regularly discussed dropping support for Postgres and H2 with the TLP team. The effort required to maintain these storage backends was moderate, despite our lack of expertise in Postgres, as long as Reaper’s architecture was simple. Complexity grew with more deployment options, culminating in the addition of the sidecar mode.

Some features require different consensus strategies depending on the backend, which sometimes led to implementations that worked well with one backend and were buggy with others.

In order to allow building new features faster, while providing a consistent experience for all users, we decided to drop the Postgres and H2 backends in 3.0.

Apache Cassandra and the managed DataStax Astra DB service are now the only production storage backends for Reaper. The free tier of Astra DB will be more than sufficient for most deployments.

Reaper does not generally require high availability; even complete data loss has mild consequences. Where Astra is not an option, a single Cassandra server can be started on the instance that hosts Reaper, or an existing cluster can be used as a backend data store.

Adaptive Repairs and Schedules

One of the pain points we observed when people start using Reaper is understanding the segment orchestration and knowing how the default timeout impacts the execution of repairs.

Repair is a complex choreography of operations in a distributed system. As such, and especially in the days when Reaper was created, the process could get blocked for several reasons and required a manual restart. The smart folks who designed Reaper at Spotify decided to put a timeout on segments to deal with such blockage, after which they would be terminated and rescheduled.

Problems arise when segments are too big (or have too much entropy) to process within the default 30-minute timeout, despite not being blocked. They are repeatedly terminated and recreated, and the repair appears to make no progress.

Reaper did a poor job of dealing with this for two main reasons:

- Each retry used the same timeout, so a segment could keep failing forever

- Nothing obvious was reported to explain what was failing and how to fix the situation

We fixed the former by using a longer timeout on subsequent retries, which is a simple trick to make repairs more “adaptive”. If the segments are too big, they’ll eventually pass after a few retries. It’s a good first step to improve the experience, but it’s not enough for scheduled repairs as they could end up with the same repeated failures for each run.
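To illustrate why a growing timeout lets oversized segments eventually pass, here is a minimal sketch. The post does not say how much longer each retry's timeout is, so the linear growth used here (base timeout multiplied by the attempt number) is an assumption, and both function names are hypothetical rather than part of Reaper.

```python
def retry_timeout_minutes(base_timeout: int, failed_attempts: int) -> int:
    """Timeout for the next attempt.

    Assumption: the timeout grows linearly with the number of past failures.
    The actual growth policy used by Reaper is not stated in this post.
    """
    return base_timeout * (failed_attempts + 1)


def attempts_needed(required_minutes: int, base_timeout: int = 30) -> int:
    """Count attempts until the timeout is long enough for the segment to finish."""
    if base_timeout <= 0:
        raise ValueError("base_timeout must be positive")
    failed = 0
    while retry_timeout_minutes(base_timeout, failed) < required_minutes:
        failed += 1  # the segment was terminated and rescheduled
    return failed + 1


# A segment that needs 75 minutes is cut off at 30 and at 60 minutes,
# then completes on the third attempt with a 90-minute timeout.
print(attempts_needed(75))  # 3
```

With a fixed timeout, the same segment would never complete, which is the "failing forever" case described above.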

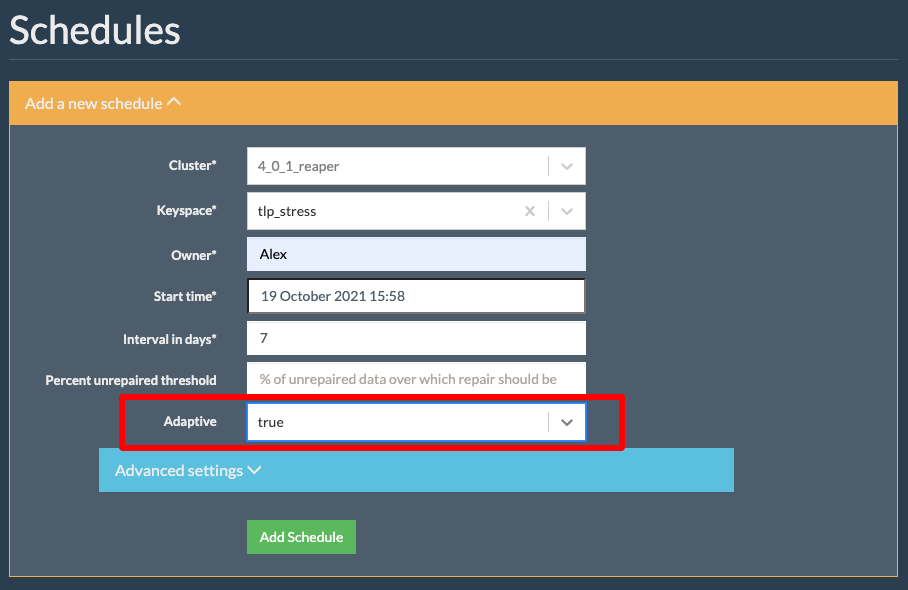

This is where we introduce adaptive schedules, which use feedback from past repair runs to adjust either the number of segments or the timeout for the next repair run.

Adaptive schedules will be updated at the end of each repair if the run metrics justify it. The schedule can get a different number of segments or a higher segment timeout depending on the latest run.

The rules are the following:

- if more than 20% of segments were extended, the number of segments will be raised by 20% on the schedule

- if fewer than 20% of segments were extended (but at least one), the timeout will be set to twice the current timeout

- if no segment was extended and the maximum duration of segments is below 5 minutes, the number of segments will be reduced by 10%, with a minimum of 16 segments per node
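The rules above can be sketched as a small decision function. This is an illustration only, not Reaper's code: the `Schedule` and `RunMetrics` types and their field names are hypothetical, the rounding direction is an assumption, and because the rules do not say what happens at exactly 20%, the sketch folds that case into the timeout rule.

```python
from dataclasses import dataclass, replace

MIN_SEGMENTS_PER_NODE = 16


@dataclass(frozen=True)
class Schedule:  # hypothetical model of a repair schedule
    segments_per_node: int
    timeout_minutes: int


@dataclass(frozen=True)
class RunMetrics:  # hypothetical summary of the latest repair run
    total_segments: int
    extended_segments: int
    max_segment_minutes: float


def adapt(schedule: Schedule, run: RunMetrics) -> Schedule:
    """Return the schedule to use for the next run."""
    if run.total_segments <= 0:
        return schedule
    # More than 20% extended (integer form of extended / total > 0.2).
    if run.extended_segments * 5 > run.total_segments:
        raised = (schedule.segments_per_node * 12 + 9) // 10  # +20%, rounded up
        return replace(schedule, segments_per_node=raised)
    # At least one segment extended, but no more than 20% (assumed boundary).
    if run.extended_segments > 0:
        return replace(schedule, timeout_minutes=schedule.timeout_minutes * 2)
    # Nothing extended and every segment finished in under 5 minutes.
    if run.max_segment_minutes < 5:
        reduced = schedule.segments_per_node * 9 // 10  # -10%, rounded down
        floored = max(MIN_SEGMENTS_PER_NODE, reduced)
        # Never raise the count in this branch if it was already below the floor.
        return replace(schedule, segments_per_node=min(schedule.segments_per_node, floored))
    return schedule


current = Schedule(segments_per_node=64, timeout_minutes=30)
print(adapt(current, RunMetrics(100, 30, 40.0)))  # more segments
print(adapt(current, RunMetrics(100, 5, 40.0)))   # longer timeout
print(adapt(current, RunMetrics(100, 0, 3.0)))    # fewer segments
```

Splitting the response this way means widespread timeouts lead to smaller segments, while a few outliers only get more time.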

This feature is disabled by default and is configurable on a per-schedule basis. The timeout can now be set differently for each schedule, from the UI or the REST API, instead of having to change the Reaper config file and restart the process.

Incremental Repair Triggers

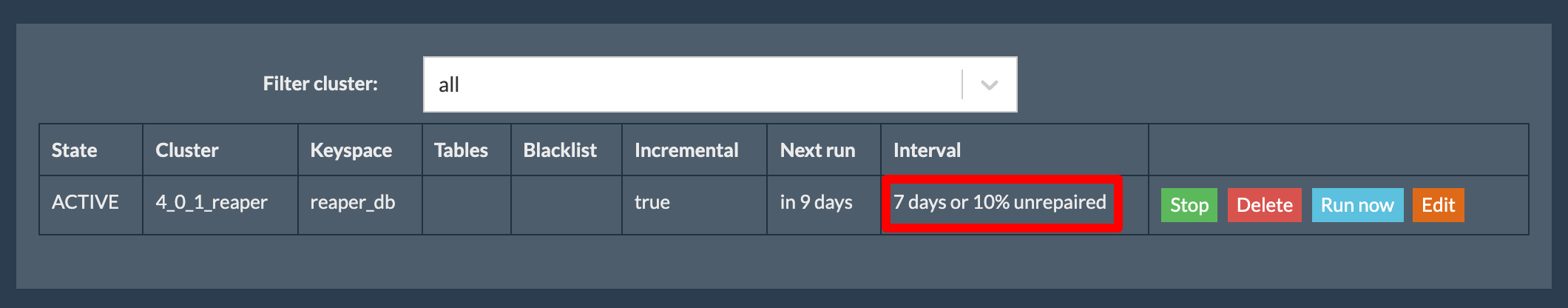

As we celebrate the long-awaited improvements in incremental repairs brought by Cassandra 4.0, it is time to embrace them with more appropriate triggers. One metric that incremental repair makes available is the percentage of repaired data per table. When running against too much unrepaired data, incremental repair can put a lot of pressure on a cluster due to the heavy anticompaction process.

The best practice is to run it on a regular basis so that the amount of unrepaired data is kept low. Since your throughput may vary from one table/keyspace to the other, it can be challenging to set the right interval for your incremental repair schedules.

Reaper 3.0 introduces a new trigger for the incremental schedules, which is a threshold of unrepaired data. This allows creating schedules that will start a new run as soon as, for example, 10% of the data for at least one table from the keyspace is unrepaired.

Those triggers are complementary to the interval in days, which could still be necessary for low traffic keyspaces that need to be repaired to secure tombstones.
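As a rough sketch of how the two triggers combine, the function below starts a run when either the interval has elapsed or at least one table has crossed the unrepaired threshold. The function name, parameters, and the choice of `>=` for the comparisons are assumptions made for illustration; they are not Reaper's API.

```python
from datetime import datetime, timedelta
from typing import Mapping, Optional


def should_start_run(
    percent_repaired: Mapping[str, float],  # per-table percentage of repaired data
    unrepaired_threshold: float,            # e.g. 10.0 for 10%
    last_run: datetime,
    now: datetime,
    interval_days: Optional[int] = None,    # None disables the interval trigger
) -> bool:
    """Return True if either trigger says a new incremental run is due."""
    # Threshold trigger: one table over the limit is enough.
    threshold_hit = any(
        100.0 - repaired >= unrepaired_threshold
        for repaired in percent_repaired.values()
    )
    # Interval trigger: still useful for low-traffic keyspaces.
    interval_hit = (
        interval_days is not None
        and now - last_run >= timedelta(days=interval_days)
    )
    return threshold_hit or interval_hit


last = datetime(2021, 6, 1)
tables = {"users": 97.5, "events": 88.0}  # "events" is 12% unrepaired
print(should_start_run(tables, 10.0, last, datetime(2021, 6, 2)))  # True
```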

These new features make it possible to safely optimize tombstone deletion by enabling the only_purge_repaired_tombstones compaction subproperty in Cassandra, which allows gc_grace_seconds to be reduced to as little as 3 hours without fear of deleted data reappearing.
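For reference, 3 hours corresponds to a gc_grace_seconds value of 10800. The helper below is a hypothetical sketch that builds the matching CQL statement; the keyspace, table, and compaction class are placeholders. Because the statement sets the table's compaction options as a whole, any other compaction subproperties already in use on the table would most likely need to be restated alongside the class.

```python
def purge_repaired_tombstones_cql(
    keyspace: str,
    table: str,
    compaction_class: str,
    gc_grace_hours: int = 3,
) -> str:
    """Build an ALTER TABLE statement enabling only_purge_repaired_tombstones."""
    gc_grace_seconds = gc_grace_hours * 60 * 60  # 3 hours -> 10800 seconds
    return (
        f"ALTER TABLE {keyspace}.{table} "
        f"WITH compaction = {{'class': '{compaction_class}', "
        f"'only_purge_repaired_tombstones': 'true'}} "
        f"AND gc_grace_seconds = {gc_grace_seconds};"
    )


# Placeholder names; substitute your own keyspace, table, and strategy.
print(purge_repaired_tombstones_cql("my_keyspace", "my_table", "SizeTieredCompactionStrategy"))
```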

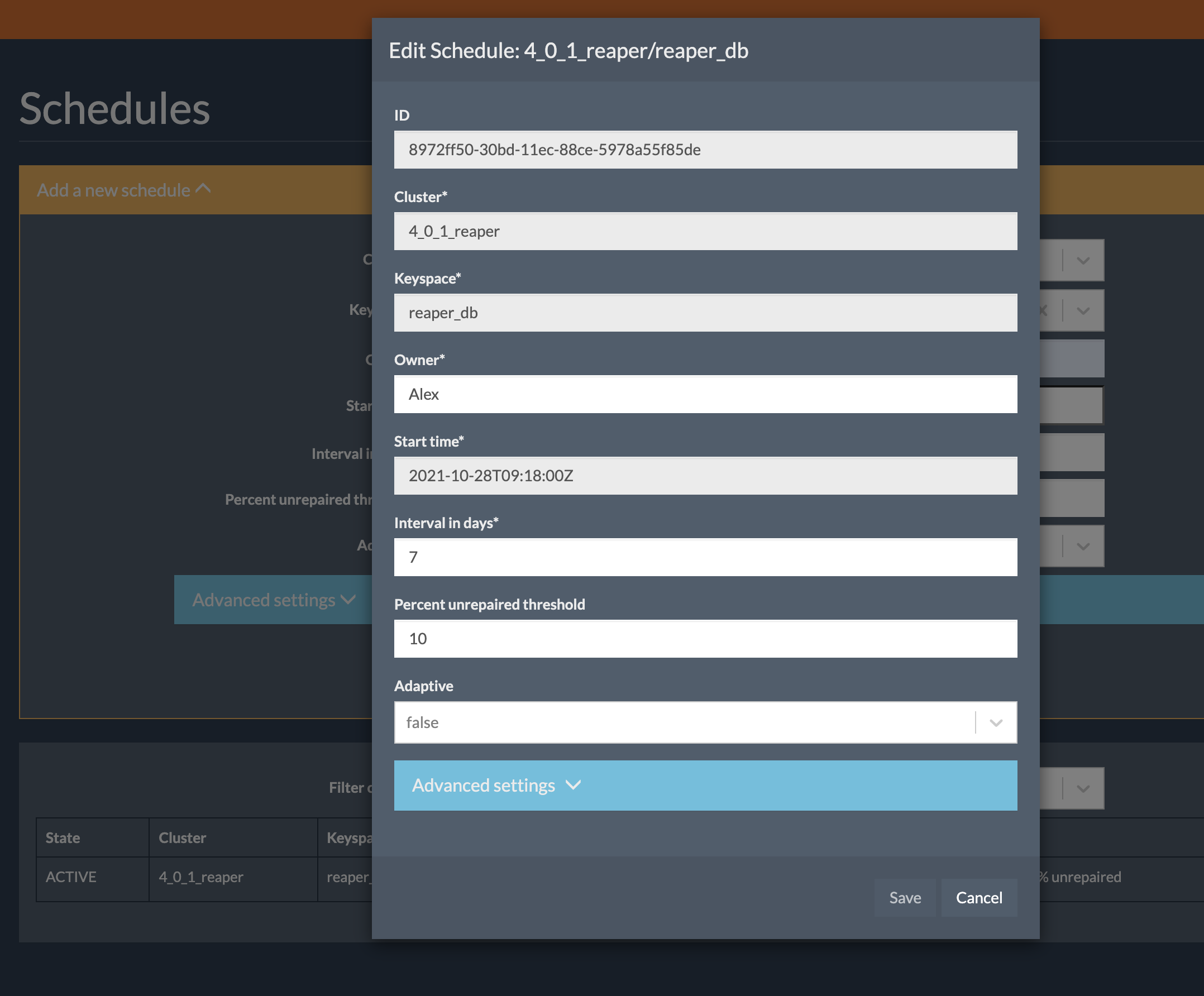

Schedules can be edited

That may sound like an obvious feature, but previous versions of Reaper didn’t allow editing an existing schedule. This led to an annoying procedure where you had to delete the schedule (which isn’t made easy by Reaper either) and recreate it with the new settings.

3.0 fixes that embarrassing situation and adds an edit button to schedules, which lets you change their mutable settings.

More improvements

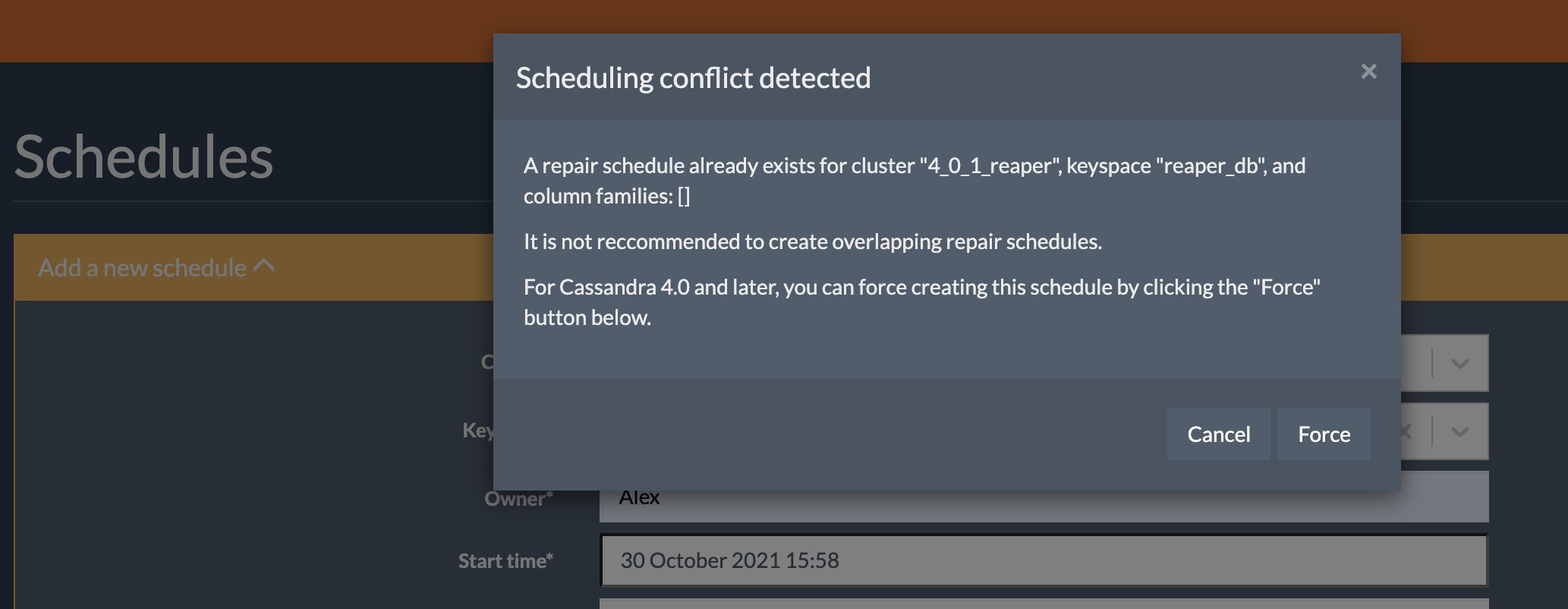

In order to protect clusters from running mixed incremental and full repairs in older versions of Cassandra, Reaper would disallow the creation of an incremental repair run/schedule if a full repair had been created on the same set of tables in the past (and vice versa).

Now that incremental repair is safe for production use, it is necessary to allow such mixed repair types. In case of conflict, Reaper 3.0 will display a pop-up that informs you and lets you force the creation of the schedule/run.

We’ve also added a special “schema migration mode” for Reaper, which exits once the schema has been created or upgraded. We use this mode in K8ssandra to prevent schema conflicts and allow the schema creation to be executed in an init container that won’t be subject to liveness probes that could trigger the premature termination of the Reaper pod:

java -jar path/to/reaper.jar schema-migration path/to/cassandra-reaper.yaml

There are many other improvements and we invite all users to check the changelog in the GitHub repo.

Upgrade Now

We encourage all Reaper users to upgrade to 3.0.0, and we recommend that users on Postgres or H2 carefully prepare their migration away from those backends. Note that there is no export/import feature and schedules will need to be recreated after the migration.

All instructions to download, install, configure, and use Reaper 3.0.0 are available on the Reaper website.