Reaper 1.3 Released

Cassandra Reaper 1.3 was released a few weeks ago, and it’s time to cover its highlights.

Configurable pending compactions threshold

Reaper protects clusters from being overloaded by repairs by not submitting new segments that involve a replica with more than 20 pending compactions. A backlog that large usually means that nodes aren’t keeping up with the streaming triggered by repair, and running more repair could seriously harm the cluster.

While the default value is a good one, there may be cases where you want to tune it to match your specific needs. Reaper 1.3.0 allows this through a new configuration setting that you can add to your yaml file:

maxPendingCompactions: 50

Use this setting with care and for specific cases only.
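
To make the effect of the threshold concrete, here is a minimal sketch of the kind of check described above. It is not Reaper's actual code (Reaper is written in Java): the `Replica` class and the `can_submit_segment` function are hypothetical names used only for illustration. The only facts taken from this post are the default of 20 and the rule that a segment is held back when a replica has more pending compactions than the threshold.

```python
from dataclasses import dataclass

# Default threshold mentioned in this post; everything else here is illustrative.
DEFAULT_MAX_PENDING_COMPACTIONS = 20


@dataclass
class Replica:
    """Hypothetical view of a node involved in a repair segment."""
    host: str
    pending_compactions: int


def can_submit_segment(replicas, max_pending_compactions=DEFAULT_MAX_PENDING_COMPACTIONS):
    """Return True only if no replica has more pending compactions than the threshold."""
    return all(r.pending_compactions <= max_pending_compactions for r in replicas)


replicas = [Replica("10.0.0.1", 3), Replica("10.0.0.2", 35)]

print(can_submit_segment(replicas))      # False: one replica exceeds the default of 20
print(can_submit_segment(replicas, 50))  # True: same cluster state, threshold raised to 50
```

As the second call shows, raising the threshold lets repair proceed on nodes that are already behind on compactions, which is why the setting should be changed cautiously.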

More nodes metrics in the UI

Following up on the effort to expand the capabilities of Reaper beyond repair, the following information is now available in the UI (and through the REST API) when clicking on a node in the cluster view.

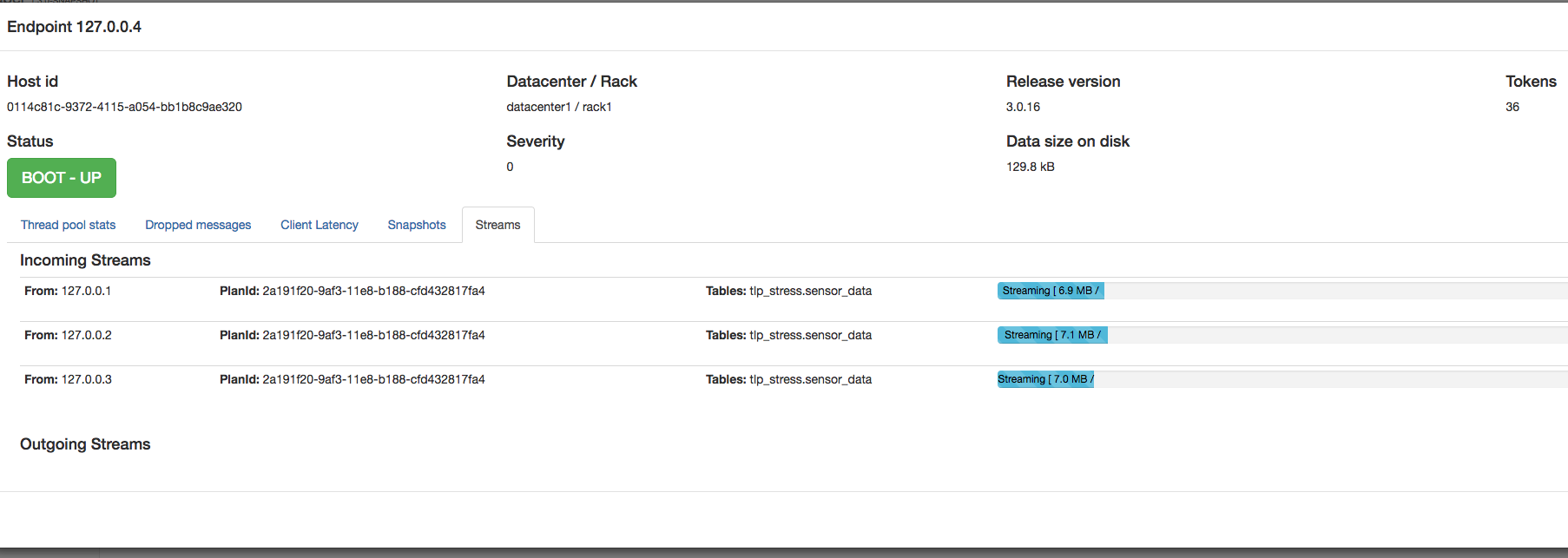

Progress of incoming and outgoing streams is now displayed:

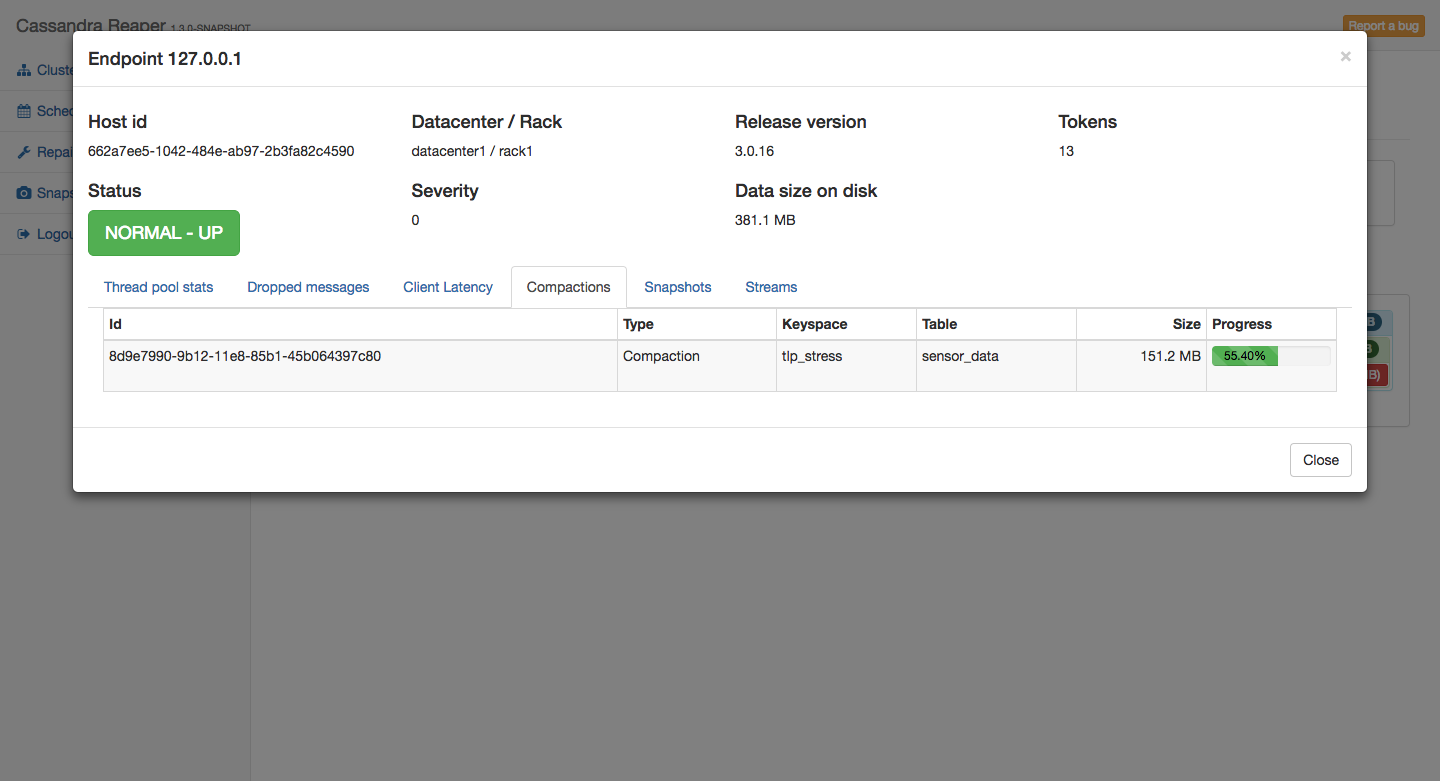

Compactions can be tracked:

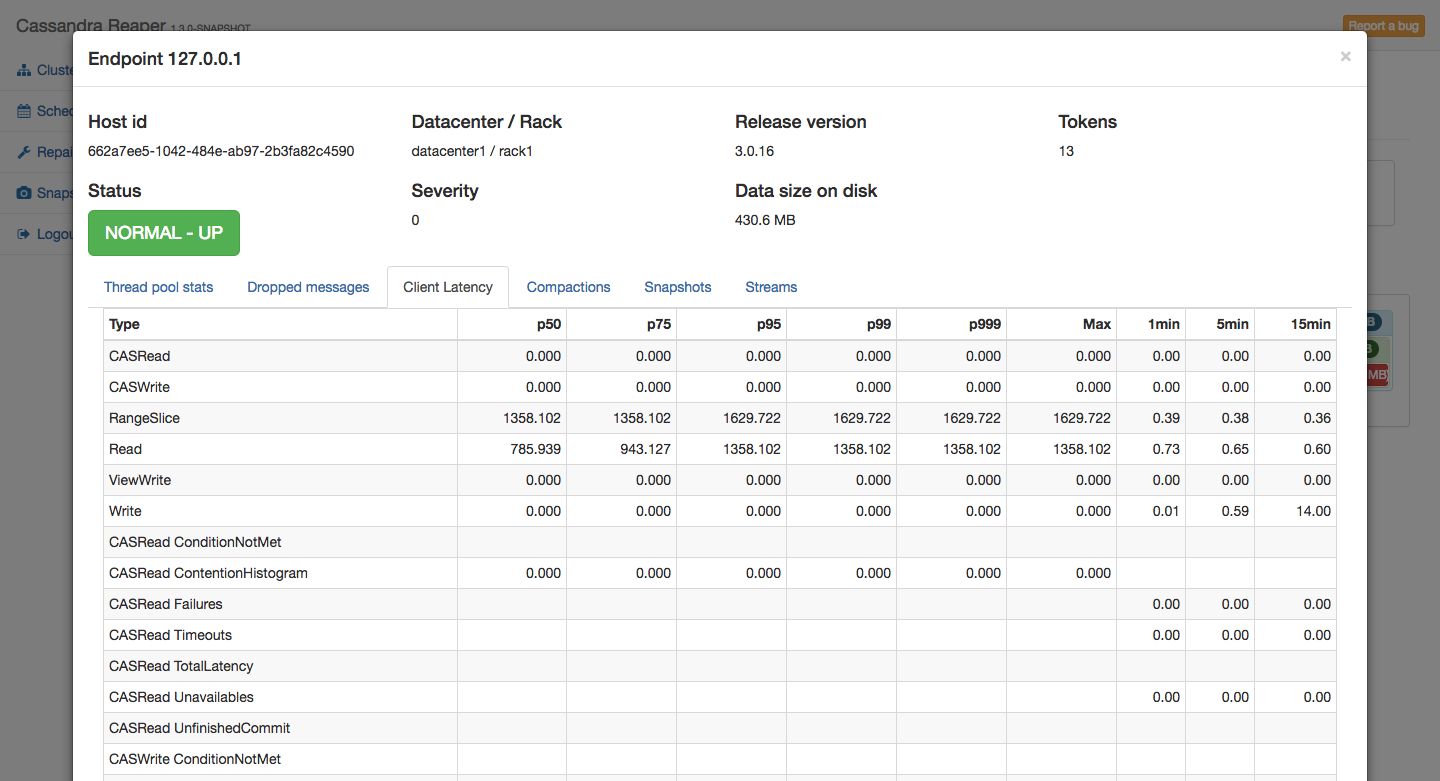

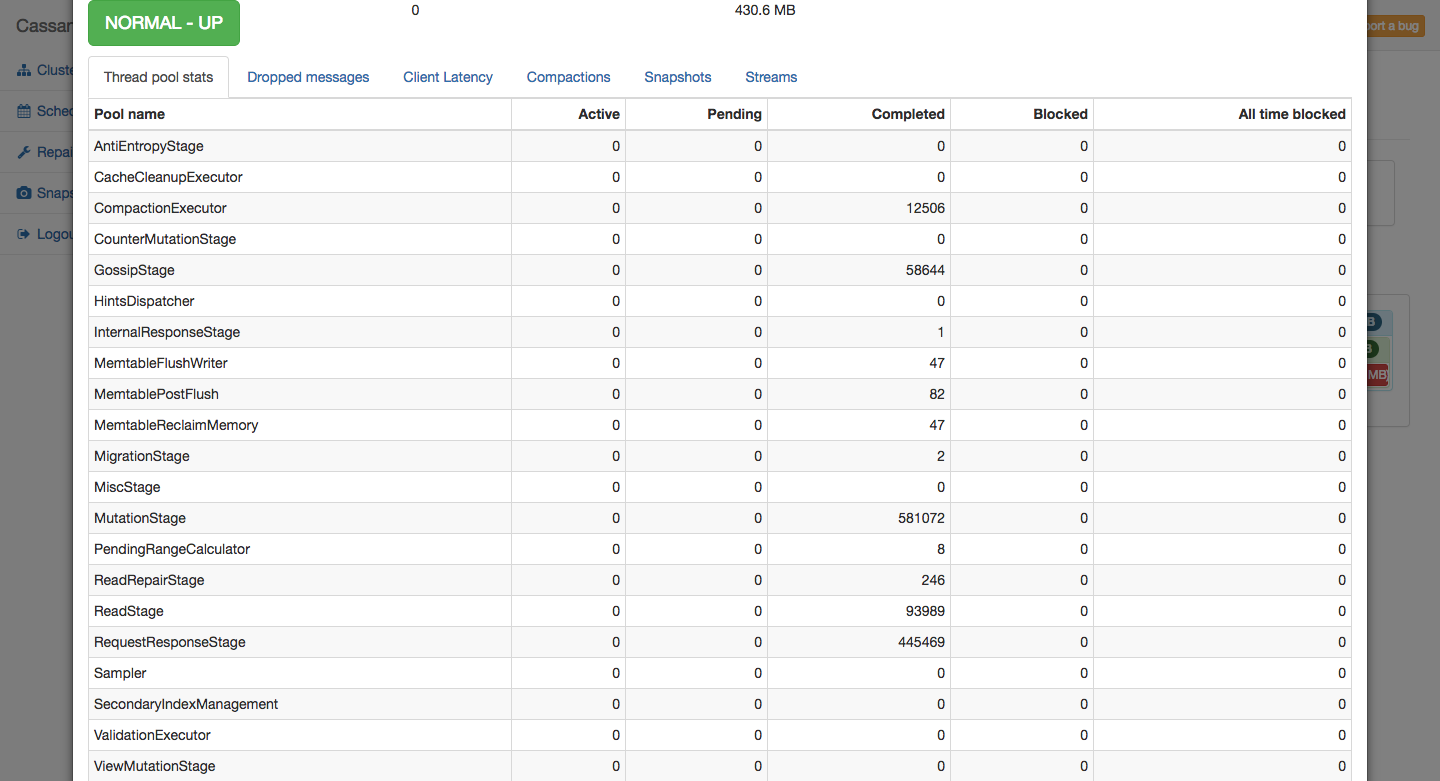

Both thread pool stats and client latency metrics are displayed in tables.

What’s next for Reaper?

As shown in a previous blog post, we’re working on an integration with Spotify’s cstar in order to run and schedule topology aware commands on clusters managed by Reaper.

We are also looking to add a sidecar mode, which would colocate an instance of Reaper with each Cassandra node, making it possible to use Reaper on clusters where JMX access is restricted to localhost.

The upgrade to 1.3 is recommended for all Reaper users. The binaries are available from yum, apt-get, Maven Central, and Docker Hub, and are also downloadable as tarball packages. Remember to back up your database before starting the upgrade.

All instructions to download, install, configure, and use Reaper 1.3 are available on the Reaper website.